In human–computer interaction, we have traditionally focused on making computers more intuitive to humans. Interface metaphors such as windows and folders were introduced to make computational structures easier to understand. By contrast, representations of the user have remained minimal, typically a cursor, a command-line focus, or a selection highlight. In this talk, I want to explore, in the context of XR, what kinds of user representations are possible and in which scenarios they are appropriate. Is an avatar that looks like me and mirrors all my movements always the optimal representation? Maybe not.

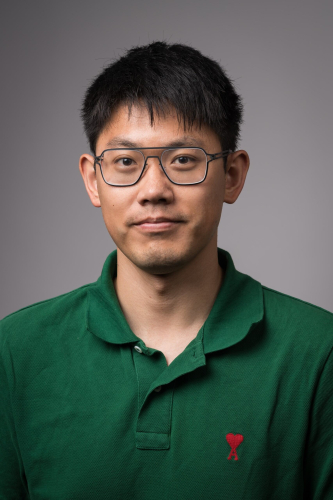

About Yukang

Yukang Yan is an Assistant Professor in the ROCHCI group in the Department of Computer Science, University of Rochester. Before that, he was a postdoctoral researcher in the Augmented Perception Lab at the Human-Computer Interaction Institute, Carnegie Mellon University. He earned both his PhD and his Bachelor's degree from Tsinghua University. His research focuses on Human-Computer Interaction and Mixed Reality. He has published in conferences and journals including ACM CHI, ACM UIST, ACM IMWUT, and IEEE VR, receiving Honorable Mention Awards at ACM CHI 2020 and ACM CHI 2023 and a Best Paper nomination at IEEE VR. His doctoral thesis received the Outstanding Doctoral Thesis award at Tsinghua University.

Date: Wednesday, February 18, 2026

Time: 3:30-4:30pm (EST)

Location: Studio X - Carlson Library, First Floor & Zoom

The Voices of XR speaker series is presented by the Center for eXtended Reality, in partnership with Mary Ann Mavrinac Studio X, University Libraries. This series is made possible by Kathy McMorran Murray.